Summary

Here, we describe a new acoustic cryptanalysis key extraction attack, applicable to GnuPG's current implementation of RSA. The attack can extract full 4096-bit RSA decryption keys from laptop computers (of various models), within an hour, using the sound generated by the computer during the decryption of some chosen ciphertexts. We experimentally demonstrate that such attacks can be carried out, using either a plain mobile phone placed next to the computer, or a more sensitive microphone placed 4 meters away.

Beyond acoustics, we demonstrate that a similar low-bandwidth attack can be performed by measuring the electric potential of a computer chassis. A suitably-equipped attacker need merely touch the target computer with his bare hand, or get the required leakage information from the ground wires at the remote end of VGA, USB or Ethernet cables.

Paper

A detailed account of the results and their context is given in the full version of our paper (8MB PDF). It is archived as IACR ePrint 2013/857, and appeared in the following journal paper:

Daniel Genkin, Adi Shamir, Eran Tromer, Acoustic cryptanalysis, Journal of Cryptology, to appear

[pdf] [html]

This work was published at the CRYPTO 2014 conference in August 2014:

Daniel Genkin, Adi Shamir, Eran Tromer, RSA key extraction via low-bandwidth acoustic cryptanalysis, proc. CRYPTO 2014, part I, LNCS 8616, 444-461, Springer, 2014

A video of the CRYPTO 2014 talk is available, including a live demonstration of acoustic key extraction (though the on-stage setup is not visible in the video).

Context

- These results were first published on 18 December 2013. Preliminary results were announced in the Eurocrypt 2004 rump session presentation, "Acoustic cryptanalysis: on nosy people and noisy machines", and are now archived. Progress since publication of the preliminary results is summarized in Q16 below.

- This research won the Black Hat 2014 Pwnie Award for Most Innovative Research.

- In August 2014 we published a follow-up paper, "Get Your Hands Off My Laptop: Physical Side-Channel Key-Extraction Attacks On PCs", which extends the attack to additional physical channels and (in some settings) reduces key extraction time to a few seconds.

- In February 2015 we published a follow-up paper, "Stealing Keys from PCs using a Radio: Cheap Electromagnetic Attacks on Windowed Exponentiation", which shows how to extract RSA and ElGamal keys from modern implementations (that use windowed exponentiation), in a few seconds, using cheap radio receivers.

- In February 2016 we published a follow-up paper, "ECDH Key-Extraction via Low-Bandwidth Electromagnetic Attacks on PCs", about electromagnetic attacks on Elliptic-Curve Diffie-Hellman (ECDH) encryption.

- In March 2016 we published a follow-up paper, "ECDSA Key Extraction from Mobile Devices via Nonintrusive Physical Side Channels", about extracting ECDSA secret keys from mobile phones.

Q&A

Q1: What information is leaked?

This depends on the specific computer hardware. We have tested numerous laptops, and several desktops.

- In almost all machines, it is possible to distinguish an idle CPU (x86 "HLT") from a busy CPU.

- On many machines, it is moreover possible to distinguish different patterns of CPU operations and different programs.

- Using GnuPG as our case study, we can, on some machines:

  - distinguish between the acoustic signatures of different RSA secret keys (signing or decryption), and

  - fully extract decryption keys, by measuring the sound the machine makes during decryption of chosen ciphertexts.

Q2: What is making the noise?

The acoustic signal of interest is generated by vibration of electronic components (capacitors and coils) in the computer's voltage regulation circuit, as it struggles to supply constant voltage to the CPU.

When different secret keys are used for decryption, they cause different patterns of CPU operations, which draw different amounts of power. The voltage regulator changes its behavior to maintain a stable voltage to the CPU despite the changes in power draw, and this alters the electric currents and voltages inside the voltage regulator itself. Those electric fluctuations cause mechanical vibrations in the electronic components, and the vibrations are transmitted into the air as sound waves.

The relevant signal is not caused by mechanical components such as the fan or hard disk, nor by the laptop's internal speaker.

Q3: Does the attack require special equipment?

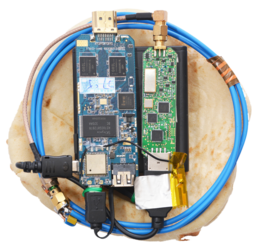

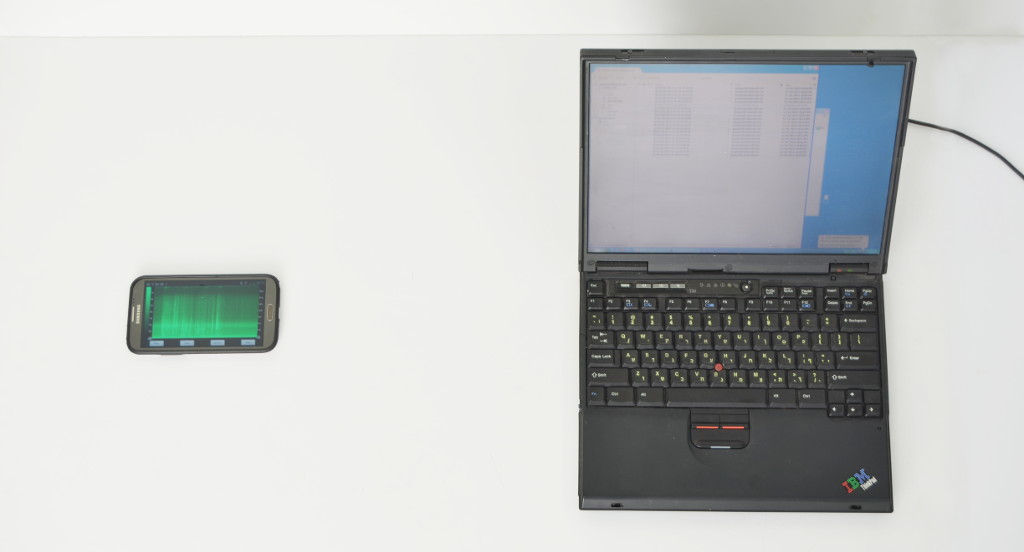

It sure helps, and in the paper we describe an expensive hardware setup for getting the best sensitivity and frequency response. But in some cases, a regular mobile phone is good enough. We have used a mobile phone to acoustically extract keys from a laptop at a distance of 30cm, as in the following picture.

Q4: What is the acoustic attack range?

That depends on many factors. Using a sensitive parabolic microphone, we surpassed 4 meters. In the following example, a parabolic microphone, attached to a padded case of equipment (power supply, amplifier, filters, and a laptop running the attack code) is extracting an RSA key from a target laptop (on the far side of the room).

Q5: What are some examples of attack scenarios?

We discuss some prospective attacks in our paper. In a nutshell:

- Install an attack app on your phone. Set up a meeting with the victim and place the phone on the desk next to his laptop (see Q3).

- Break into the victim's phone, install the attack app, and wait until the victim inadvertently places his phone next to the target laptop.

- Construct a web page that uses the microphone of the computer running the browser (using Flash or HTML Media Capture, under some excuse such as VoIP chat). When the user permits the microphone access, use it to steal the user's secret key.

- Put your stash of eavesdropping bugs and laser microphones to a new use.

- Send your server to a colocation facility, with a good microphone inside the box, and then acoustically extract keys from all nearby servers.

- Get near a TEMPEST/1-92 protected machine, such as the one pictured to the right, place a microphone next to its ventilation holes, and extract its supposedly-protected secrets.

Q6: What if I don't have any microphone, or the environment is too noisy?

Another low-bandwidth channel is the electric potential of the laptop's chassis. We've shown that in many computers, this "ground" potential fluctuates (even when connected to a grounded power supply) and leaks the requisite signal. This can be measured in several ways, including human touch and far end of cable attacks, which are detailed in our follow-up paper titled "Get Your Hands Off My Laptop: Physical Side-Channel Key-Extraction Attacks On PCs".

Q7: Can an attacker use power analysis instead?

Yes, power analysis (by measuring the current drawn from the laptop's DC power supply) is another way to perform our low-bandwidth attack. This too is detailed in our follow-up paper, "Get Your Hands Off My Laptop: Physical Side-Channel Key-Extraction Attacks On PCs".

If the attacker can measure clockrate-scale (GHz) power leakage, then traditional power analysis may also be very effective, and far faster. However, this is foiled by the common practice of filtering out high frequencies on the power supply.

Q8: How can low-frequency (kHz) acoustic leakage provide useful information about a much faster (GHz) CPU?

Individual CPU operations are too fast for a microphone to pick up, but long operations (e.g., modular exponentiation in RSA) can create a characteristic (and detectable) acoustic spectral signature over many milliseconds. In the chosen-ciphertext key extraction attack, we carefully craft the inputs to RSA decryption in order to maximize the dependence of the spectral signature on the secret key bits. See also Q18.

For the acoustic channel, we can't just increase the measurement bandwidth, because the bandwidth of acoustic signals is very low: up to 20 kHz for audible signals and commodity microphones, and up to a few hundred kHz using ultrasound microphones. Above a few hundred kHz, sound propagation in the air has a very short range: essentially, when you try to vibrate air molecules so fast they just heat up, instead of moving in unison as a sound wave.
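To get a feel for the scale gap, the following back-of-the-envelope sketch counts how many CPU clock cycles elapse during one period of an acoustic signal. The 2 GHz clock rate and the 300 kHz ultrasound figure are illustrative assumptions (the text above says only "GHz" and "a few hundred kHz"), and the function name is ours.

```python
def cycles_per_acoustic_period(cpu_hz: float, acoustic_hz: float) -> float:
    """Number of CPU clock cycles that elapse during one acoustic period."""
    return cpu_hz / acoustic_hz


CPU_HZ = 2e9           # assumed CPU clock rate (illustrative)
AUDIBLE_HZ = 20e3      # upper limit for audible signals, as stated above
ULTRASOUND_HZ = 300e3  # assumed value for "a few hundred kHz"

audible_cycles = cycles_per_acoustic_period(CPU_HZ, AUDIBLE_HZ)
ultrasound_cycles = cycles_per_acoustic_period(CPU_HZ, ULTRASOUND_HZ)

print(f"cycles per 20 kHz period:  {audible_cycles:,.0f}")
print(f"cycles per 300 kHz period: {ultrasound_cycles:,.0f}")
```

Under these assumptions, a single acoustic period spans thousands to a hundred thousand clock cycles, which is why only operations that last many milliseconds leave a usable acoustic signature.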

Q9: How vulnerable is GnuPG now?

We have disclosed our attack to GnuPG developers under CVE-2013-4576, suggested suitable countermeasures, and worked with the developers to test them. New versions of GnuPG 1.x and of libgcrypt (which underlies GnuPG 2.x), containing these countermeasures and resistant to our current key-extraction attack, were released concurrently with the first public posting of these results. Some of the effects we found (including RSA key distinguishability) remain present.

Q10: How vulnerable are other algorithms and cryptographic implementations?

This is an open research question. Our attack requires careful cryptographic analysis of the implementation, which so far has been conducted only for the GnuPG 1.x implementation of RSA. Implementations using ciphertext blinding (a common side channel countermeasure) appear less vulnerable. We have, however, observed that GnuPG's implementation of ElGamal encryption also allows acoustically distinguishing keys.

Q11: Is there a realistic way to perform a chosen-ciphertext attack on GnuPG?

To apply the attack to GnuPG, we found a way to cause GnuPG to automatically decrypt ciphertexts chosen by the attacker. The idea is to use encrypted e-mail messages following the OpenPGP and PGP/MIME protocols. For example, Enigmail (a popular plugin to the Thunderbird e-mail client) automatically decrypts incoming e-mail (for notification purposes) using GnuPG. An attacker can e-mail suitably-crafted messages to the victims, wait until they reach the target computer, and observe the acoustic signature of their decryption (as shown above), thereby closing the adaptive attack loop.

Q12: Won't the attack be foiled by loud fan noise, or by multitasking, or by several computers in the same room?

Usually not. The interesting acoustic signals are mostly above 10 kHz, whereas typical computer fan noise and normal room noise are concentrated at lower frequencies and can thus be filtered out. In task-switching systems, different tasks can be distinguished by their different acoustic spectral signatures. Using multiple cores turns out to help the attack (by shifting down the signal frequencies). When several computers are present, they can be told apart by spatial localization, or by their different acoustic signatures (which vary with the hardware, the component temperatures, and other environmental conditions).

Q13: What countermeasures are available?

One obvious countermeasure is to use sound dampening equipment, such as "sound-proof" boxes, designed to sufficiently attenuate all relevant frequencies. Conversely, a sufficiently strong wide-band noise source can mask the informative signals, though ergonomic concerns may render this unattractive. Careful circuit design and high-quality electronic components can probably reduce the emanations.

Alternatively, the cryptographic software can be changed, and algorithmic techniques employed to render the emanations less useful to the attacker. These techniques ensure that the rough-scale behavior of the algorithm is independent of the inputs it receives; they usually carry some performance penalty, but are often used in any case to thwart other side-channel attacks. This is what we helped implement in GnuPG (see Q9).
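One such algorithmic technique is the ciphertext blinding mentioned in Q10. The sketch below is a textbook illustration with a toy key, not the code that went into GnuPG or libgcrypt: the function name, the key values and the message are all hypothetical. The decryptor multiplies the ciphertext by a fresh random factor before exponentiating, so the value actually fed to the modular exponentiation is not under the attacker's control.

```python
import secrets
from math import gcd


def blinded_decrypt(c: int, d: int, e: int, n: int) -> int:
    """Textbook RSA decryption with ciphertext blinding (illustrative only)."""
    # Pick a random blinding factor r that is invertible modulo n.
    while True:
        r = secrets.randbelow(n - 2) + 2
        if gcd(r, n) == 1:
            break
    # Blind: c' = c * r^e mod n, so that (c')^d = m * r mod n.
    c_blind = (c * pow(r, e, n)) % n
    # The secret exponentiation now runs on a randomized input.
    m_blind = pow(c_blind, d, n)
    # Unblind: multiply by r^-1 mod n (three-argument pow, Python 3.8+).
    return (m_blind * pow(r, -1, n)) % n


# Toy parameters, far too small for real use: n = 61 * 53.
n, e, d = 3233, 17, 2753
message = 65
ciphertext = pow(message, e, n)

recovered = blinded_decrypt(ciphertext, d, e, n)
print(recovered == message)
```

Blinding costs one extra modular exponentiation with the public exponent and one modular inversion per decryption, which is the kind of performance penalty referred to above.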

Q14: Why software countermeasures? Isn't it the hardware's responsibility to avoid physical leakage?

It is tempting to enforce proper layering, and decree that preventing physical leakage is the responsibility of the physical hardware. Unfortunately, such low-level leakage prevention is often impractical due to the very bad cost vs. security tradeoff: (1) any leakage remnants can often be amplified by suitable manipulation at the higher levels, as we indeed do in our chosen-ciphertext attack; (2) low-level mechanisms try to protect all computation, even though most of it is insensitive or does not induce easily-exploitable leakage; and (3) leakage is often an inevitable side effect of essential performance-enhancing mechanisms (e.g., consider cache attacks).

Application-layer, algorithm-specific mitigation, in contrast, prevents the (inevitably) leaked signal from bearing any useful information. It is often cheap and effective, and most cryptographic software (including GnuPG and libgcrypt) already includes various sorts of mitigation, both through explicit code and through choice of algorithms. In fact, the side-channel resistance of software implementations is nowadays a major concern in the choice of cryptographic primitives, and was an explicit evaluation criterion in NIST's AES and SHA-3 competitions.

Q15: What about other acoustic attacks?

See the discussion and references in our paper, and the Wikipedia page on Acoustic Cryptanalysis. In a nutshell:

- Eavesdropping on keyboard keystrokes has been well discussed; keys can be distinguished by timing, or by their different sounds. While this attack is applicable to data that is entered manually (e.g., passwords), it is not applicable to larger secret data such as RSA keys.

- Another acoustic source is hard disk head seeks; this source does not appear very useful in the presence of caching, delayed writes and multitasking.

- Preceding modern computers is MI5's "ENGULF" technique (recounted in Peter Wright's book Spycatcher), whereby a phone tap was used to eavesdrop on the operation of an Egyptian embassy's Hagelin cipher machine, thereby recovering its secret key.

- Declassified US government publications describe "TEMPEST" acoustic leakage from mechanical and electromechanical devices, but make no mention of modern electronic computers.

Q16: What's new since the Eurocrypt 2004 presentation?

- Full key extraction attack, exploiting deep internal details of GnuPG's implementation of RSA

- Dramatic improvement in range and applicability (increased from 20cm with open chassis to 4m in normal operation)

- Much better hardware (some self-built), allowing longer range and better signal characterization

- Signal processing and error correction, making it possible to perform the attack using a mobile phone despite the low-quality microphone

- Many more targets tested

- Non-acoustic low-bandwidth attacks, including chassis potential analysis

- Countermeasures implemented and tested in GnuPG (see Q9)

- Detailed writeup.

Q17: What does this acoustic leakage sound like?

To human ears, the acoustic leakage typically sounds like a faint, high-pitched whining or buzzing noise (if it is at all within audible range). However, if we could hear ultrasound, it might sound like this processed audio recording of GnuPG decrypting several ciphertexts [mp3]. (To make it audible, we selected the interesting frequencies using a bandpass filter, and then downshifted them to within human hearing range.) In this recording, we can discern several pairs of tones. Each such pair is the sound made by a single RSA decryption. There are two tones per decryption because, internally, each GnuPG RSA decryption first exponentiates modulo the secret prime p and then modulo the secret prime q, and we can actually hear the difference between these stages. Moreover, each of these pairs of tones sounds different, because each decryption uses a different key. So in this example, by simply listening to the processed signal, we can distinguish between different secret keys.

A better way to understand the signal is to visualize it as a spectrogram, which plots the acoustic power as a function of time and frequency. For example, here is another recording of GnuPG decrypting several RSA ciphertexts:

In this spectrogram, the horizontal axis (frequency) spans 40 kHz, and the vertical axis (time) spans 1.4 seconds. Each yellow arrow points to the middle of a GnuPG RSA decryption. It is easy to see where each decryption starts and ends. We also observe that the sound changes halfway through each decryption: this is the aforementioned switch from the secret prime p to the secret prime q. Most interestingly, we can see the subtle differences between these decryptions (especially near the yellow arrows), and thus distinguish between different secret keys.

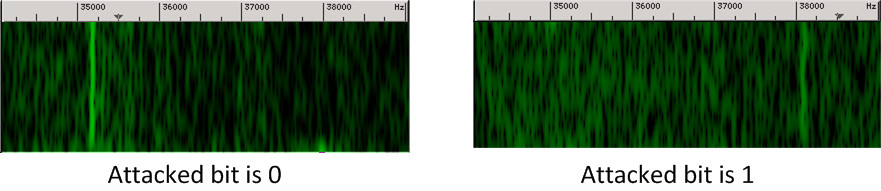
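For readers who want to experiment, here is a minimal sketch of how a spectrogram like the one above can be computed. It is not our analysis code: it uses a naive discrete Fourier transform on non-overlapping frames, and the signal is synthetic, a tone that changes frequency halfway through, loosely mimicking the switch from p to q. The sample rate, frame length and tone frequencies are assumptions chosen to keep the example small.

```python
import cmath
import math

SAMPLE_RATE = 96_000  # Hz, assumed for this example
FRAME_LEN = 64        # samples per frame, so each bin is 1500 Hz wide


def spectrogram(samples, frame_len):
    """Return one list of per-bin power values for each non-overlapping frame."""
    frames = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        powers = []
        for k in range(frame_len // 2):  # bins below the Nyquist frequency
            acc = sum(x * cmath.exp(-2j * math.pi * k * i / frame_len)
                      for i, x in enumerate(frame))
            powers.append(abs(acc) ** 2)
        frames.append(powers)
    return frames


def dominant_bins(frames):
    """Index of the strongest frequency bin in each frame."""
    return [max(range(len(p)), key=p.__getitem__) for p in frames]


def tone(freq_hz, n_samples):
    """Synthetic pure tone standing in for a real recording."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE)
            for i in range(n_samples)]


# First half at 30 kHz, second half at 33 kHz (hypothetical frequencies).
signal = tone(30_000, 4 * FRAME_LEN) + tone(33_000, 4 * FRAME_LEN)
peaks = dominant_bins(spectrogram(signal, FRAME_LEN))
print([b * SAMPLE_RATE / FRAME_LEN for b in peaks])  # peak frequency per frame
```

In practice one would use an FFT with overlapping, windowed frames; the naive transform is used here only to keep the sketch free of dependencies.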

Q18: How do you extract the secret key bits?

The key extraction attack finds the secret key bits one by one, sequentially. For each bit, the attacker crafts a ciphertext of a special form that makes the acoustic leakage depend specifically on the value of that bit. The attacker then triggers decryption of that chosen ciphertext, records the resulting sound, and analyzes it. The following demonstrates a typical stage of this attack, focusing on a single secret key bit. If this bit is 0, then decryption of the chosen ciphertext will sound like the left-side spectrogram (with a strong component at 35.2 kHz). If the secret bit is 1, the decryption will sound like the right-side spectrogram (where the strong component is at 38.1 kHz).

Using automated signal classification, the attack tells apart these cases, and deduces the secret key bit.
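As a rough illustration of this classification step, the sketch below compares the signal power at the two frequencies from the example above, using the Goertzel algorithm. This is a deliberately simplified stand-in, not the classifier used in the attack: the 192 kHz sample rate, the noise level, the synthetic recordings and all function names are assumptions, and in a real attack the distinguishing frequencies vary with the target machine and must be found first.

```python
import math
import random

SAMPLE_RATE = 192_000  # Hz, assumed; must exceed twice the highest tone
FREQ_BIT0 = 35_200     # Hz, strong component when the bit is 0 (example above)
FREQ_BIT1 = 38_100     # Hz, strong component when the bit is 1 (example above)


def goertzel_power(samples, sample_rate, freq_hz):
    """Signal power near freq_hz, computed with the Goertzel recurrence."""
    coeff = 2.0 * math.cos(2.0 * math.pi * freq_hz / sample_rate)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def classify_bit(samples, sample_rate=SAMPLE_RATE):
    """Guess the key bit from whichever candidate frequency is stronger."""
    p0 = goertzel_power(samples, sample_rate, FREQ_BIT0)
    p1 = goertzel_power(samples, sample_rate, FREQ_BIT1)
    return 0 if p0 > p1 else 1


def synthetic_recording(freq_hz, n_samples=4096, noise=0.5, seed=0):
    """Noisy pure tone standing in for a real recording (hypothetical data)."""
    rng = random.Random(seed)
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) + rng.gauss(0, noise)
            for i in range(n_samples)]


print(classify_bit(synthetic_recording(FREQ_BIT0)))
print(classify_bit(synthetic_recording(FREQ_BIT1)))
```

Goertzel is a convenient choice when only a few known frequencies matter, since it avoids computing a full spectrum for every recording.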

Q19: Aren't sensitive devices protected by shielding?

Interestingly, many of the physical side-channel countermeasures used in highly sensitive applications, such as air gaps, Faraday cages, and power supply filters, provide no protection against acoustic leakage. In particular, Faraday cages containing computers require ventilation, which is typically provided by means of vents covered with perforated sheet metal or metal honeycomb (see Q5 above). These are very effective at attenuating compromising electromagnetic radiation ("TEMPEST"), but are nearly transparent to sound.

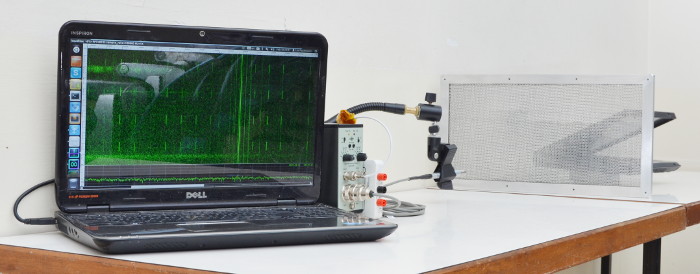

For example, the following depicts recording the acoustic emanations through a double-honeycomb metal ventilation mesh designed for blocking electromagnetic radiation. (Covering this mesh with cardboard eliminates the signal.)

Q20: What is the purpose of your research?

People entrust their most private information to computers, under the assumption that their computers will keep their secrets. Our goal is to discover when this assumption is dangerously false: to understand how information may be leaking in unexpected ways that can be used nefariously. We then seek ways to build software and hardware that will better protect the users. In this case, for example, we worked with the developers of the vulnerable software to change the mathematical operations so that the emitted sound will not reveal the secret key.

Moreover, at a philosophical level, we are intrigued by the tension between computation and computers. Most of computer science deals with computation, the abstract mathematical manipulation of numbers, with formally-designated inputs and outputs. But this computation is eventually embodied in physical computers, with myriad unexpected outputs (physical leakage) and inputs (via sensors and faults). The abstractions underlying computer science and programming, productive as they are, blind us to those phenomena. By challenging and excavating through the convenient abstractions, we gain a deeper understanding of those evanescent ensembles of energy and electrons to which we entrust our daily lives.

Acknowledgements

This work was sponsored by the Check Point Institute for Information Security; by the Israeli Ministry of Science and Technology; by the Israeli Centers of Research Excellence I-CORE program (center 4/11); and by NATO's Public Diplomacy Division in the Framework of "Science for Peace".